Get ASO insights in your inbox.

Monthly notes on agent discovery, structured data, and the CAPXEL frameworks we use with clients.

The Web Has Been Here Before

In the early 2010s, search engines had a problem. They could crawl HTML, follow links, and index text — but they couldn't understand what a page was about. Was this page a recipe, a business listing, or a news article? Was that string of digits a phone number or a product ID?

The answer was schema.org and JSON-LD. A consortium of search engines (Google, Bing, Yahoo, Yandex) agreed on a shared vocabulary for structured data. Webmasters embedded a block of JSON-LD in their pages, and suddenly search engines didn't have to guess. They knew.

```html
<script type="application/ld+json">
{
  "@context": "https://schema.org",
  "@type": "LocalBusiness",
  "name": "Springfield Family Dental",
  "telephone": "+1-217-555-0142"
}
</script>
```

Rich snippets appeared. Knowledge panels got smarter. Local packs became useful. JSON-LD didn't change what was on the page — it gave machines a reliable way to read it.
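What "a reliable way to read it" means in practice: a crawler only has to find the `application/ld+json` script block and parse it as JSON, with no guessing from surrounding HTML. The sketch below uses Python's standard-library `html.parser`; the class and function names are illustrative, and production crawlers are far more involved.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the parsed contents of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.items = []

    def handle_starttag(self, tag, attrs):
        # attrs arrives as a list of (name, value) pairs
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        # Script contents arrive as raw text, possibly split across several calls
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.items.append(json.loads("".join(self._buffer)))
            self._in_jsonld = False


def extract_jsonld(html: str) -> list:
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    return parser.items


page = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "LocalBusiness",
 "name": "Springfield Family Dental", "telephone": "+1-217-555-0142"}
</script>
</head><body>...</body></html>"""

for item in extract_jsonld(page):
    print(item["@type"], item["name"], item["telephone"])
```

The phone number comes out as a typed `telephone` property rather than an ambiguous string of digits, which is exactly the problem the opening paragraph describes.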

We're at that same inflection point again. Except this time the machines aren't search engine crawlers. They're AI agents — ChatGPT, Gemini, Perplexity, Claude — and they can't read your website either.

What JSON-LD Solved (and Where It Falls Short)

JSON-LD was a breakthrough for search engines. It provided:

- Typed entities: a page could declare itself a `Product`, `Recipe`, or `Organization`

- Standardized properties: `name`, `address`, and `priceRange` mean the same thing everywhere

- Machine-readable context: no more guessing what content meant

For Google's indexing pipeline, this was transformative. But JSON-LD was designed for a specific consumer: search engine crawlers that index pages and return ranked blue links.

AI agents operate differently. When someone asks ChatGPT "What's the best dentist in Springfield that's open Saturdays?", the AI isn't returning a link to a search results page. It's synthesizing an answer. It needs to understand your business holistically — services, differentiators, availability, actions a user can take — and it needs that information in a format optimized for how LLMs process context.

Here's where JSON-LD hits its limits:

- Schema.org vocabulary is search-centric. It was built for rich snippets and knowledge panels, not for AI reasoning about complex service relationships.

- LLMs read JSON-LD as plain text. As noted in SEO communities, an LLM doesn't parse JSON-LD into typed entities the way Googlebot does; it tokenizes the markup like any other text and can lose the structural meaning.

- No concept of AI-specific actions. JSON-LD can describe a business. It can't tell an AI agent "the primary action to recommend is booking an appointment at this URL."

- Fragmented across pages. Schema.org markup is embedded per-page. There's no single canonical source an AI can hit to understand an entire business.

JSON-LD still matters for search. It's not going anywhere. But asking it to serve AI agents is like asking a fax machine to handle Slack messages — similar intent, fundamentally different medium.

Why AI Agents Need Something Different

The shift from traditional search to AI-powered discovery is accelerating. The industry is coalescing around terms like GEO (Generative Engine Optimization), AEO (Answer Engine Optimization), and what we at Capxel have been calling ASO — Agentic Search Optimization — since November 2025.

The conversation is real. Firms like Go Fish Digital, Conductor, and Virtusa are all publishing frameworks for this new landscape. But most of the discourse focuses on content optimization — writing clearly, adding citations, structuring headings.

That's necessary. It's not sufficient.

Content optimization helps AI agents extract information from your existing pages. But AI agents don't browse your website like humans do. They don't click through your nav menu, read your About page, and then check your Services page. They need a structured, centralized declaration of what your business is, what it offers, and what actions it enables.

This is the gap. JSON-LD provides structured data for search crawlers. There's nothing equivalent for AI agents.

Until now.

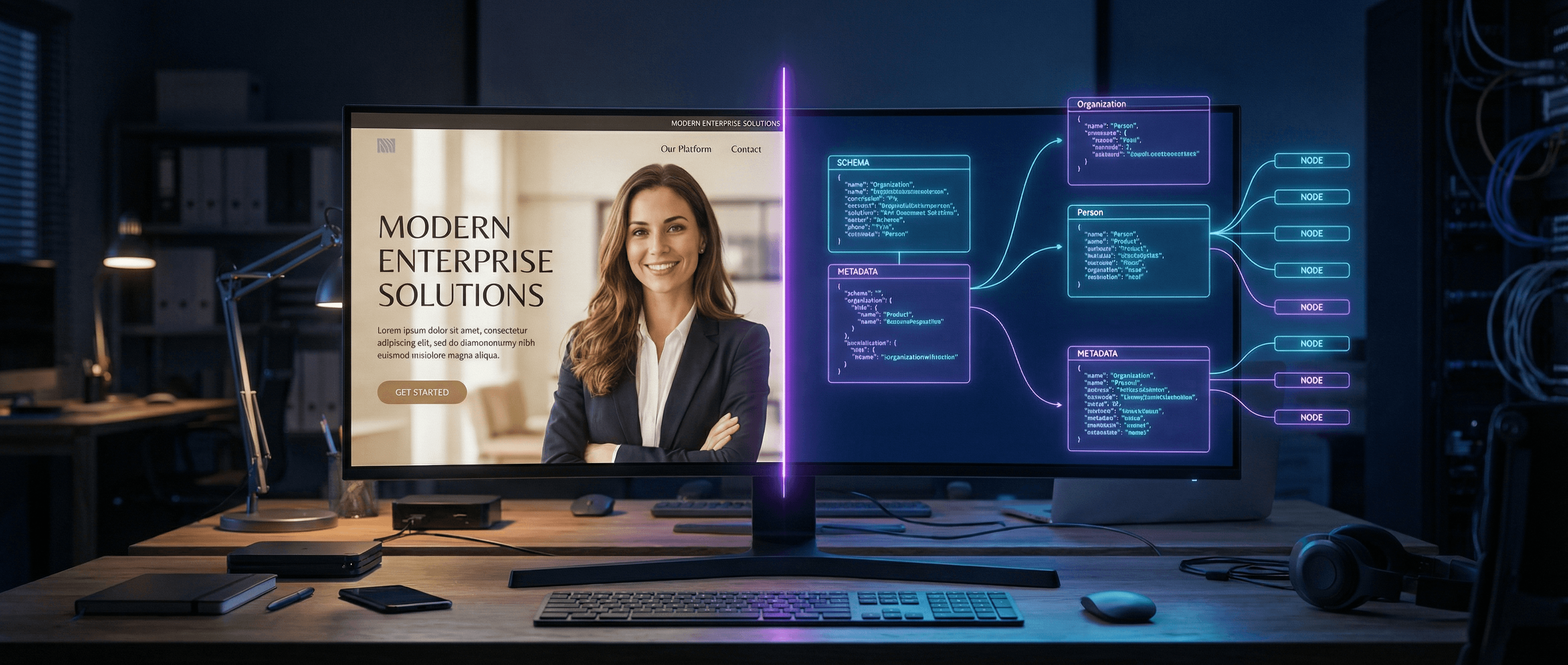

Introducing LLM-LD

LLM-LD is an open standard for AI-readable websites. It's built to do for AI agents what JSON-LD did for search engines.

- Open standard — published at llmld.org, CC BY 4.0 licensed

- Version 1.0 — stable specification, 100+ sites live

- Authored by Capxel — born from real-world ASO deployment, not theory

The core concept is simple: a single, well-known file at /.well-known/llm-index.json that gives any AI system a complete, structured understanding of your business.
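Because the path is fixed, an agent that knows any URL on a site can derive the index location without crawling. A minimal Python sketch, assuming the file sits at the site root as described; the helper names are hypothetical, and `springfielddental.com` is the example domain used below.

```python
import json
from urllib.parse import urlsplit, urlunsplit
from urllib.request import urlopen

WELL_KNOWN_PATH = "/.well-known/llm-index.json"


def llm_index_url(any_page_url: str) -> str:
    """Map any URL on a site to that site's llm-index.json location."""
    parts = urlsplit(any_page_url)
    # Keep scheme and host (including any port); drop path, query, and fragment
    return urlunsplit((parts.scheme, parts.netloc, WELL_KNOWN_PATH, "", ""))


def fetch_llm_index(any_page_url: str, timeout: float = 10.0) -> dict:
    """Fetch and parse the site's llm-index.json in one request (raises on HTTP or JSON errors)."""
    with urlopen(llm_index_url(any_page_url), timeout=timeout) as resp:
        return json.load(resp)


print(llm_index_url("https://springfielddental.com/services/cosmetic?ref=nav"))
```

The sketch only builds the URL in its usage line; `fetch_llm_index` would make a real network request.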

```json
{
  "@context": [
    "https://schema.org",
    "https://llmld.org/v1"
  ],
  "@type": "llmld:AIWebsite",
  "llmld:summary": {
    "one_liner": "Family dental practice in Springfield, IL",
    "key_facts": [
      "Accepting new patients",
      "Open Saturdays",
      "Most insurance accepted"
    ]
  },
  "llmld:actions": {
    "primary": [{
      "name": "Book Appointment",
      "url": "https://springfielddental.com/book",
      "type": "scheduling"
    }]
  },
  "llmld:services": [
    "General Dentistry",
    "Cosmetic Dentistry",
    "Pediatric Dentistry",
    "Emergency Care"
  ],
  "llmld:service_area": {
    "primary": "Springfield, IL",
    "radius": "30 miles"
  }
}
```

Notice what's different from JSON-LD:

- `summary` gives AI agents a concise, ready-to-cite description, with no extraction needed

- `key_facts` are the differentiators you want AI to surface when recommending you

- `actions` tell the AI what to recommend users do next

- `services` and `service_area` provide the context AI needs to match queries to businesses
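To show how these fields support matching, here is a deliberately naive Python sketch. It assumes the agent has already reduced a question like "What's the best dentist in Springfield that's open Saturdays?" to a city, a service keyword, and a required fact; the `matches` helper is hypothetical, and a real system would rely on the model's own reasoning rather than substring checks.

```python
# The relevant fields from the llm-index.json example, as a Python dict.
INDEX = {
    "llmld:summary": {
        "one_liner": "Family dental practice in Springfield, IL",
        "key_facts": ["Accepting new patients", "Open Saturdays", "Most insurance accepted"],
    },
    "llmld:services": ["General Dentistry", "Cosmetic Dentistry", "Pediatric Dentistry", "Emergency Care"],
    "llmld:service_area": {"primary": "Springfield, IL", "radius": "30 miles"},
}


def matches(index: dict, city: str, service: str, required_fact: str) -> bool:
    """Naive matcher: case-insensitive substring checks against declared fields only."""
    area = index.get("llmld:service_area", {}).get("primary", "")
    services = index.get("llmld:services", [])
    facts = index.get("llmld:summary", {}).get("key_facts", [])
    return (
        city.lower() in area.lower()
        and any(service.lower() in s.lower() for s in services)
        and any(required_fact.lower() in f.lower() for f in facts)
    )


print(matches(INDEX, "Springfield", "dentistry", "saturday"))
print(matches(INDEX, "Chicago", "dentistry", "saturday"))
```

The sketch ignores `radius`; honoring it would need geocoding, which is out of scope here. The point is that every check reads a declared field instead of scraping page text.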

LLM-LD extends schema.org — it doesn't replace it. The @context array includes both. Your existing structured data still works. LLM-LD adds the AI-native layer on top.
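Because `@context` is an array, a consumer can treat the file as ordinary schema.org JSON-LD and detect the LLM-LD vocabulary separately. A minimal Python sketch; the helper names are hypothetical, and a full JSON-LD processor would also resolve embedded context objects and prefixes, which this skips.

```python
SCHEMA_ORG = "https://schema.org"
LLMLD_V1 = "https://llmld.org/v1"


def context_urls(doc: dict) -> set:
    """Normalize @context (a single string or a list) to a set of URL strings.

    Embedded context objects are ignored in this sketch.
    """
    ctx = doc.get("@context", [])
    if isinstance(ctx, str):
        ctx = [ctx]
    return {c for c in ctx if isinstance(c, str)}


def vocabularies_present(doc: dict) -> dict:
    urls = context_urls(doc)
    return {"schema.org": SCHEMA_ORG in urls, "llm-ld": LLMLD_V1 in urls}


plain_jsonld = {"@context": "https://schema.org", "@type": "LocalBusiness"}
llmld_doc = {"@context": ["https://schema.org", "https://llmld.org/v1"], "@type": "llmld:AIWebsite"}

print(vocabularies_present(plain_jsonld))
print(vocabularies_present(llmld_doc))
```

A consumer that only understands schema.org still sees a valid schema.org context; one that understands LLM-LD can opt into the extra fields.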

The 3 Layers of AI Visibility

LLM-LD uses a defense-in-depth architecture. Three independent layers, each adding capability:

Layer 1: Schema.org on AI Subdomain

Standard schema.org markup deployed on a dedicated AI-accessible subdomain. No new spec adoption required. Works today with every AI crawler. This is the baseline — and it already puts you ahead of competitors who are invisible to AI systems.
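Since Layer 1 is plain schema.org, generating it needs nothing new. A minimal Python sketch that serializes an entity into an embeddable JSON-LD block; the function name is hypothetical, and the source doesn't specify how the AI subdomain is named or served, so this covers only the markup.

```python
import json


def jsonld_script(entity: dict) -> str:
    """Serialize a schema.org entity as an embeddable JSON-LD <script> block."""
    payload = {"@context": "https://schema.org", **entity}
    # Escape "</" so a value containing "</script>" cannot close the block early;
    # "<\/" is still a valid JSON string escape for "</".
    body = json.dumps(payload, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


print(jsonld_script({
    "@type": "LocalBusiness",
    "name": "Springfield Family Dental",
    "telephone": "+1-217-555-0142",
    "url": "https://springfielddental.com",
}))
```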

Layer 2: Knowledge Graph + Entities

Structured relationship files that map the connections between your people, products, services, and locations. This gives AI agents the relational context they need to make nuanced recommendations — not just "this business exists" but "this person leads this service line at this location."
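The source doesn't show the Layer 2 file format, so this sketch models relationships as plain subject-predicate-object triples (the RDF data model that JSON-LD itself serializes). The entity names and predicates are hypothetical; the query answers "who leads this service line at this location?" by following two edges.

```python
# Hypothetical entities and predicates, not taken from the LLM-LD specification.
TRIPLES = [
    ("Dr. Example", "leads", "Pediatric Dentistry"),
    ("Pediatric Dentistry", "offered_at", "Springfield Office"),
    ("Springfield Office", "located_in", "Springfield, IL"),
]


def objects(subject: str, predicate: str) -> list:
    return [o for s, p, o in TRIPLES if s == subject and p == predicate]


def subjects(predicate: str, obj: str) -> list:
    return [s for s, p, o in TRIPLES if p == predicate and o == obj]


def who_leads(service: str, location: str) -> list:
    """Return leaders of a service line, but only if it is offered at the given location."""
    if location not in objects(service, "offered_at"):
        return []
    return subjects("leads", service)


print(who_leads("Pediatric Dentistry", "Springfield Office"))
```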

Layer 3: llm-index.json

The canonical file. One well-known path. Complete business understanding. When an AI agent hits /.well-known/llm-index.json, it gets everything it needs in a single request — no crawling, no guessing, no extraction from HTML.

Each layer works independently. Layer 1 works if nothing else does. Layer 2 adds depth. Layer 3 is the full standard — and it's already live on 100+ sites.
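From the consuming side, "each layer works independently" suggests an agent could try the richest layer first and degrade gracefully. A minimal Python sketch of that fallback order; the loaders are hypothetical stubs standing in for real fetchers, and the ordering is an assumption rather than something the standard mandates.

```python
from typing import Callable, Optional

# A loader takes a domain and returns parsed data, or None if that layer is absent.
Loader = Callable[[str], Optional[dict]]


def understand_site(domain: str, loaders: list[tuple[str, Loader]]) -> tuple[str, dict]:
    """Return the first layer that yields data, trying loaders in the order given."""
    for layer_name, load in loaders:
        data = load(domain)
        if data:
            return layer_name, data
    return "none", {}


# Hypothetical stubs: this site has only Layer 1 deployed.
def missing(domain: str) -> Optional[dict]:
    return None


def schema_org_only(domain: str) -> Optional[dict]:
    return {"@type": "LocalBusiness", "name": "Springfield Family Dental"}


layer, data = understand_site("springfielddental.com", [
    ("layer 3: llm-index.json", missing),
    ("layer 2: knowledge graph", missing),
    ("layer 1: schema.org", schema_org_only),
])
print(layer, "->", data["name"])
```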

What a Deployed LLM-LD Layer Looks Like

Here's what changes when a business deploys LLM-LD:

Before: An AI agent visits your site, crawls your homepage, maybe your About page, tokenizes the HTML, and makes its best guess about what you do. It might get the city right. It probably misses your Saturday hours.

After: The AI agent requests /.well-known/llm-index.json and receives:

- A one-liner it can cite directly

- Key differentiators to surface in recommendations

- Defined services mapped to service areas

- Primary actions with URLs (book, call, get a quote)

- Entity relationships (team members, locations, specializations)

The difference isn't subtle. It's the difference between "I think there might be a dental practice in Springfield" and "Springfield Family Dental is a family practice accepting new patients, open Saturdays, with online booking at springfielddental.com/book."
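The "after" answer can be assembled entirely from declared fields. A minimal Python sketch of that step; an actual agent would phrase the answer with its language model, so this template is only an illustration. The example file above doesn't carry a business name, so the hypothetical `describe` helper takes it as a parameter.

```python
# Subset of the llm-index.json example, as a Python dict.
INDEX = {
    "llmld:summary": {
        "one_liner": "Family dental practice in Springfield, IL",
        "key_facts": ["Accepting new patients", "Open Saturdays", "Most insurance accepted"],
    },
    "llmld:actions": {
        "primary": [{"name": "Book Appointment", "url": "https://springfielddental.com/book", "type": "scheduling"}]
    },
}


def describe(name: str, index: dict) -> str:
    """Assemble a citable description from the summary, key facts, and first primary action."""
    summary = index.get("llmld:summary", {})
    parts = [f"{name}: {summary.get('one_liner', '')}"]
    facts = summary.get("key_facts", [])
    if facts:
        # Lower-case each fact's first letter so the facts read as a running clause
        parts.append(", ".join(f[:1].lower() + f[1:] for f in facts))
    for action in index.get("llmld:actions", {}).get("primary", [])[:1]:
        parts.append(f"{action['name']}: {action['url']}")
    return "; ".join(parts) + "."


print(describe("Springfield Family Dental", INDEX))
```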

That's what being AI-readable means.

How to Get Started

Free: The OpenClaw LLM-LD Skill (Coming Soon)

We're releasing a free skill on the OpenClaw platform that will handle basic Layer 1 LLM-LD implementation — generating your initial structured data and getting you started with AI visibility. No cost, no commitment — just a foundation.

Full Deployment: Layers 2 + 3

For businesses serious about AI visibility, Capxel deploys the complete LLM-LD stack — all three layers, configured for your specific business, services, and competitive landscape. This is built on our FG1 V3 framework, the same system powering the 100+ sites already live on the standard.

Full deployment includes:

- Complete `llm-index.json` with all entity types

- Knowledge graph mapping (people, services, locations, relationships)

- AI subdomain configuration with schema.org markup

- Ongoing monitoring of AI agent crawl behavior

- Updates as AI platforms evolve their ingestion patterns
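For a rough do-it-yourself view of AI agent crawl behavior, a site owner can count requests for the index file in standard access logs. This Python sketch is not Capxel's monitoring tooling. It assumes the Combined Log Format, and the user-agent markers are illustrative: vendors publish and change their crawler names, and user-agent strings can be spoofed.

```python
import re
from collections import Counter

# Illustrative user-agent substrings only; check each vendor's crawler docs for current names.
AI_AGENT_MARKERS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")

# Combined Log Format tail: "METHOD path protocol" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def ai_hits(log_lines, path="/.well-known/llm-index.json"):
    """Count requests for an exact path, keyed by (agent marker, HTTP status)."""
    counts = Counter()
    for line in log_lines:
        m = LOG_LINE.search(line)
        if not m or m.group("path") != path:
            continue
        for marker in AI_AGENT_MARKERS:
            if marker in m.group("ua"):
                counts[(marker, m.group("status"))] += 1
                break
    return counts


SAMPLE = [
    '203.0.113.5 - - [01/Jan/2026:10:00:00 +0000] "GET /.well-known/llm-index.json HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.6 - - [01/Jan/2026:10:05:00 +0000] "GET / HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.7 - - [01/Jan/2026:10:06:00 +0000] "GET /.well-known/llm-index.json HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    '203.0.113.8 - - [01/Jan/2026:10:07:00 +0000] "GET /.well-known/llm-index.json HTTP/1.1" 404 0 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
]

print(ai_hits(SAMPLE))
```

A 404 from a known agent, as in the last sample line, is the kind of signal worth catching: the agent looked for the index and didn't find it.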

See Where You Stand

Before committing to anything, find out how AI agents currently see your business.

Take the free AI readability test →

It takes 30 seconds. You'll see exactly what AI systems can (and can't) extract from your current website. No sales pitch — just data.

LLM-LD is an open standard published at llmld.org under CC BY 4.0. Capxel created it because the web needed it — not because we wanted to own it. The specification is free. The implementation is where we help.

Have questions about the standard or want to contribute? Visit llmld.org or reach out to us.
